A Free and Powerful Web Scraper

First up, you will need the right web scraper to tackle this task.

URL extractor from multiple website how to

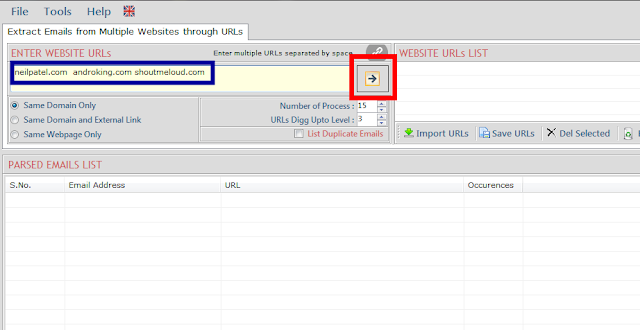

Today, we will go over how to set up a web scraper to extract data from multiple different URLs. For example, you might be trying to extract data from several URLs on the same website. Web scraping projects can get quite complex.

In Python, parse the response with `soup = Soup(…ntent, "html.parser")` and collect the article links with `article_urls = list(map(itemgetter("href"), soup.…`. Play around with the `pageNumber` and `ppp` key-value pairs in the data payload dictionary to get different articles: `def main():` …

In Excel, head to the Data tab in the ribbon and press the From Web button under the Get & Transform section.
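As a rough illustration of the data payload idea, the sketch below builds one POST request per batch of articles by varying `pageNumber` and `ppp`. The endpoint URL is hypothetical (the real one is not given here), `ppp` is assumed to mean "posts per page", and the helper names `build_request` and `build_requests` are made up for this example. Only the standard library is used, and nothing is sent over the network.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint: the real URL is not given in the text.
ENDPOINT = "https://example.com/load-more"


def build_request(page_number: int, per_page: int) -> Request:
    """Build (but do not send) the POST request for one batch of articles."""
    payload = {
        "pageNumber": page_number,  # which batch of articles to ask for
        "ppp": per_page,            # assumption: "posts per page"
    }
    body = urlencode(payload).encode("utf-8")
    return Request(ENDPOINT, data=body, method="POST")


def build_requests(first_page: int, last_page: int, per_page: int) -> list[Request]:
    """One request per page number in the inclusive range."""
    return [build_request(n, per_page) for n in range(first_page, last_page + 1)]
```

Each request could then be sent with `urllib.request.urlopen(request)` and the HTML response handed to the parser. A real site may expect extra form fields or headers, which are best copied from the browser's dev tools.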

First, we will create a query to extract the data on one page. We will then turn this into a function query where the input is an event page URL. This way we can apply the query to each URL in a list of all the URLs.

If you log your browser's network traffic, you can see that pressing the Show more button makes an XHR request via HTTP POST, and the response is HTML. You can copy the POST payload from your browser's dev tools as well.

It is useful to build advanced scrapers that crawl every page of a certain website to…
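The "one page first, then a function over a list of URLs" pattern is not specific to Excel. Here is a minimal Python sketch of the same idea, not the Excel query itself. It assumes the only data wanted from each page is its `<title>`. The names `TitleParser`, `fetch`, `extract_one` and `extract_all` are invented for this example.

```python
from html.parser import HTMLParser
from typing import Callable
from urllib.request import urlopen


class TitleParser(HTMLParser):
    """Collects the text of the page's <title> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch(url: str) -> str:
    """Download a page and return its HTML as text."""
    with urlopen(url) as response:
        return response.read().decode("utf-8", errors="replace")


def extract_one(url: str, fetcher: Callable[[str], str] = fetch) -> dict:
    """Step 1: the query for a single page, with the URL as its input."""
    parser = TitleParser()
    parser.feed(fetcher(url))
    return {"url": url, "title": parser.title.strip()}


def extract_all(urls: list[str], fetcher: Callable[[str], str] = fetch) -> list[dict]:
    """Step 2: apply the single-page query to every URL in the list."""
    return [extract_one(url, fetcher) for url in urls]
```

Passing the fetcher as a parameter keeps the extraction logic testable without network access. In practice you would call `extract_all(urls)` and let the default `fetch` download each page.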

URL extractor from multiple website code

Extracting all links of a web page is a common task among web scrapers. As a human, you're probably pretty good at telling a product page from a news article. Extract Content From Websites Automatically: Reads Websites like Humans.

I would like to apply my code to a new website, which has only a single page but with a "show more" button. Some people suggest using Selenium, but I couldn't find an example of an application similar to mine. Do you have any idea what I could use and change in my code to obtain all the links on the page? The relevant lines are `soup = BeautifulSoup(response.text,'lxml')` and `url = '' #+ str(i) <- for webpage with many pages`.
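For the "all links of a page" task, a minimal sketch could look like the following. It deliberately swaps BeautifulSoup for the standard library's `html.parser` so that it runs without extra packages. The names `LinkCollector` and `extract_links` are invented for this example, and the HTML is assumed to have been downloaded already.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag, resolved against a base URL."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                absolute = urljoin(self.base_url, value)
                # Keep the first occurrence only, preserving page order.
                if absolute not in self.links:
                    self.links.append(absolute)


def extract_links(html: str, base_url: str) -> list[str]:
    """Return the de-duplicated absolute links found in an HTML string."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links
```

On a page with a "show more" button this only sees the links present in the HTML it is given. Links loaded later arrive in separate responses, so each of those responses would need to be passed through `extract_links` as well.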

URL extractor from multiple website portable

PCFerret Pro / PCFerret Pro Portable v.2.

I'm quite new to the web scraping field. I previously used some code to extract URLs from a website containing multiple pages and then save them in a txt file.

IPVoid Multi URL Opener: With this online URL opener you can open multiple URLs or links at the same time in new tabs, an easy way to open multiple URLs through your web browser. Simply enter the URLs, one per line, in the textarea and press the button.

URL extractor from multiple website software

Excel Import Multiple Web Sites Software v.7.0

Octoparse can scrape data from multiple web pages that share a similar layout, or from many website URLs that are organized as a logical sequence, by using a URL list.
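When the URLs follow a logical sequence, the list itself can be generated rather than typed by hand. A small sketch, assuming the only thing that changes between pages is a page number; the template URL and the helper name `build_url_list` are hypothetical:

```python
def build_url_list(template: str, first: int, last: int) -> list[str]:
    """Fill the {page} placeholder for every page number in the inclusive range."""
    return [template.format(page=n) for n in range(first, last + 1)]


# Hypothetical URL pattern, used only for illustration.
urls = build_url_list("https://example.com/articles?page={page}", 1, 3)

# One URL per line, the form most URL-list inputs expect.
url_list_text = "\n".join(urls)
```

The resulting text can be pasted into any tool that takes one URL per line, or the `urls` list can be looped over directly in a script.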